Zero-shot Minecraft Planning Agent: KB-Enhanced LLM-based PDDL Generation

We tackle long-horizon planning in Minecraft with KB-enhanced PDDL generation: retrieve prior knowledge from Wiki, construct or refine a PDDL domain, and reuse that domain for planning. Reusing the domain reduces repeated LLM calls, improves success rate, and lowers token usage and execution time.

1. Background: Why long-horizon planning in Minecraft is hard

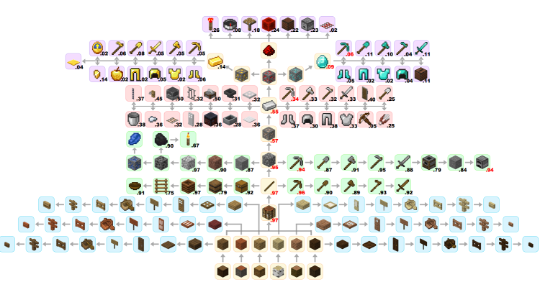

Minecraft tasks often require multi-step dependencies (e.g., crafting chains). The technology tree creates long plans with complex prerequisites, making zero-shot success difficult.

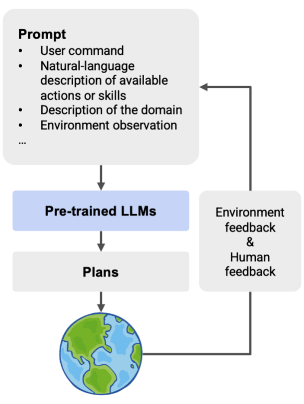

2. LLMs in task planning: Planner vs. World Model

LLMs can be used in two roles:

- LLM as Planner: generate plans / high-level action sequences.

- LLM as World Model: model environment dynamics to support planning.

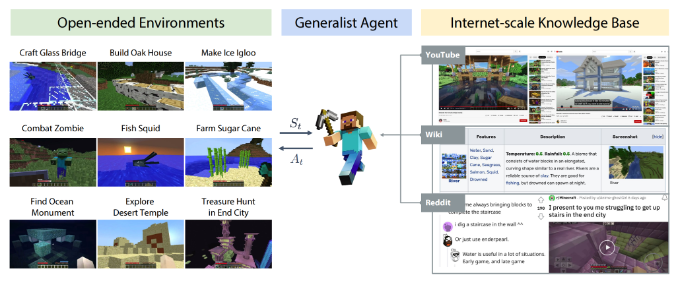

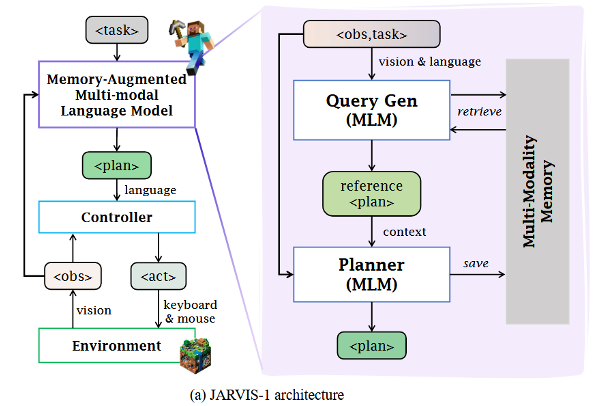

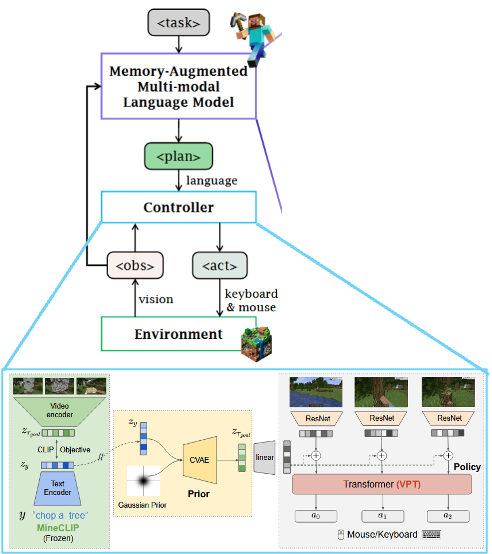

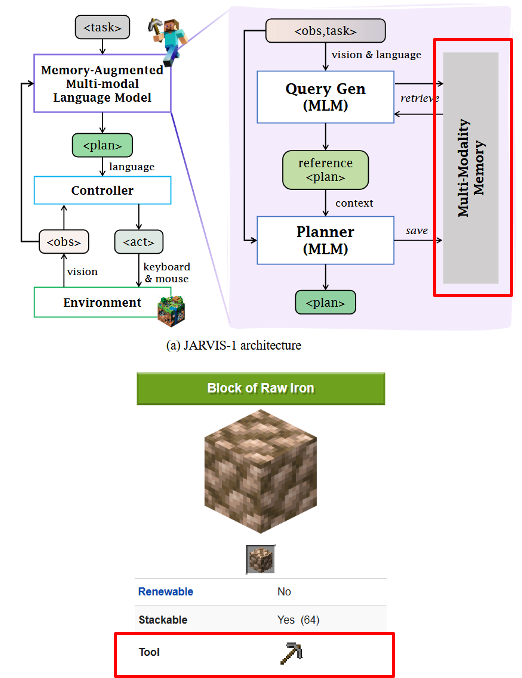

3. Jarvis-1 overview: Hierarchical planning with memory

Jarvis-1 is a hierarchical approach where a memory-augmented MLLM retrieves reference trajectories and uses in-context learning to generate the next high-level action. The controller (e.g., Steve-1) maps high-level actions to real-time mouse/keyboard actions via a pretrained skill library.

4. Motivation: Rethinking Jarvis-1

Q1: Where does prior knowledge come from?

Jarvis-1 relies heavily on a trajectory memory database (human-crafted, oracle, or agent-generated). In a strict zero-shot setting, missing dependency knowledge can cause failures, for example trying to mine iron ore (to obtain raw iron) without first equipping the required tool.

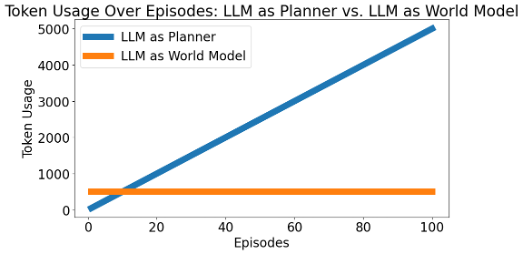

Q2: Is “LLM as planner” the best choice?

Querying an LLM for every action in every episode is expensive. If we run many episodes of the same task, LLM-as-policy scales like:

#episodes × #actions × tokens/action

PDDL planning, by contrast, reuses one domain across all episodes and pays the LLM only for a small number of domain improvements:

1 × (#PDDL improvements)
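
The two scaling expressions above can be compared with a small worked calculation. This is only an illustrative sketch: the function names are hypothetical, every number is a made-up placeholder rather than a measured result, and `tokens_per_improvement` is an assumption added here because the expression above counts improvements, not tokens.

```python
def llm_policy_tokens(episodes: int, actions_per_episode: int, tokens_per_action: int) -> int:
    """Tokens spent when the LLM is queried for every action in every episode."""
    return episodes * actions_per_episode * tokens_per_action


def pddl_reuse_tokens(domain_improvements: int, tokens_per_improvement: int) -> int:
    """Tokens spent when the domain is built once and reused.

    The leading 1 mirrors the "1 x (#PDDL improvements)" expression:
    the cost does not grow with the number of episodes.
    """
    return 1 * domain_improvements * tokens_per_improvement


# Placeholder inputs chosen only to show the scaling, not taken from any experiment.
policy_cost = llm_policy_tokens(episodes=30, actions_per_episode=20, tokens_per_action=1_000)
pddl_cost = pddl_reuse_tokens(domain_improvements=5, tokens_per_improvement=4_000)
print(f"LLM per action: {policy_cost} tokens, PDDL reuse: {pddl_cost} tokens")
```

With these placeholder inputs the per-action scheme costs 600,000 tokens and the reuse scheme 20,000. Doubling the number of episodes doubles the first figure and leaves the second unchanged.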

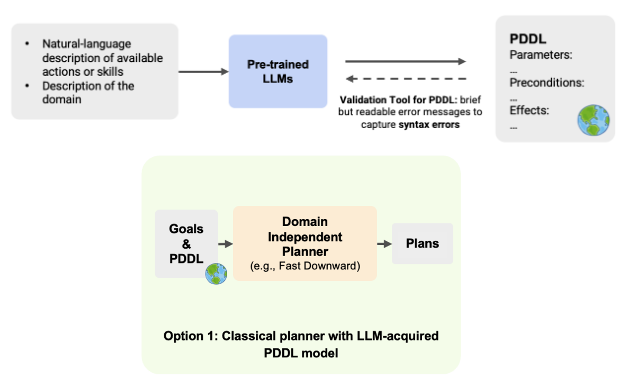

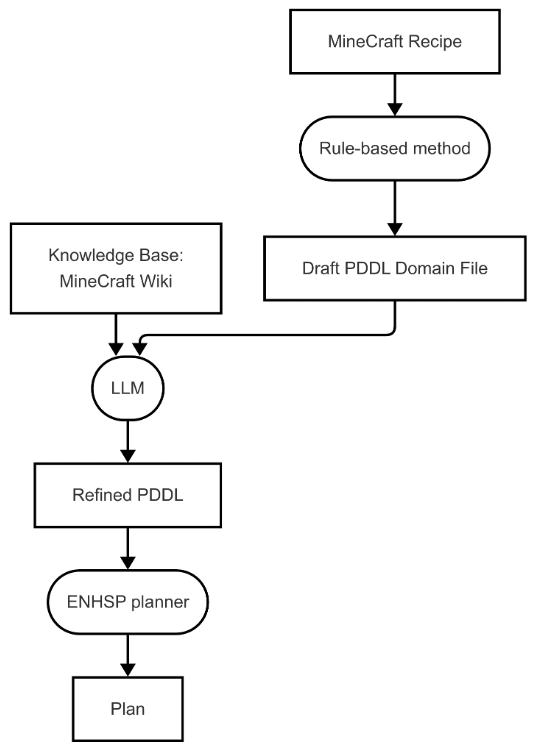

5. Method: KB-enhanced PDDL Generation

We answer the two questions above as follows:

- Prior knowledge can be retrieved from Wiki.

- This knowledge can be converted into a reusable PDDL domain file, so we can plan without querying the LLM at every step.

- The resulting planned actions are executed by the controller (e.g., Steve-1).
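
As a rough illustration of turning retrieved knowledge into a reusable domain file, the sketch below renders a few dependency entries as PDDL text. The `Recipe` structure, the `have` predicate, the `get-` action prefix, and the hand-written `KB_RECIPES` entries are all assumptions made for this example. In the approach described here an LLM constructs or refines the domain, so this shows only a plausible shape for the output, not the actual generation step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipe:
    """Hypothetical KB entry: an item and the items that must already be held."""
    product: str
    needs: tuple = ()


def to_pddl_action(recipe: Recipe) -> str:
    """Render one recipe as a STRIPS-style action (item quantities are ignored)."""
    if recipe.needs:
        precond = "(and " + " ".join(f"(have {n})" for n in recipe.needs) + ")"
    else:
        precond = "()"  # raw resource: nothing required beforehand
    return (
        f"  (:action get-{recipe.product}\n"
        f"    :parameters ()\n"
        f"    :precondition {precond}\n"
        f"    :effect (have {recipe.product}))"
    )


def to_pddl_domain(name: str, recipes: list) -> str:
    """Assemble a complete domain definition from a list of recipes."""
    items = sorted({r.product for r in recipes} | {n for r in recipes for n in r.needs})
    header = (
        f"(define (domain {name})\n"
        f"  (:requirements :strips)\n"
        f"  (:constants {' '.join(items)})\n"
        f"  (:predicates (have ?item))\n"
    )
    body = "\n".join(to_pddl_action(r) for r in recipes)
    return header + body + "\n)"


# Hand-written stand-ins for knowledge that would be retrieved from the KB.
KB_RECIPES = [
    Recipe("log"),
    Recipe("planks", ("log",)),
    Recipe("crafting_table", ("planks",)),
]

print(to_pddl_domain("minecraft", KB_RECIPES))
```

Because the result is a plain text file, it can be written once as `domain.pddl` and handed to a planner for every later episode of the same task.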

5.1 Pipeline (high-level)

- Retrieve relevant crafting/dependency knowledge from Wiki (KB).

- Construct/Refine a PDDL domain (actions, preconditions, effects).

- Plan with a PDDL planner to obtain a high-level action sequence.

- Execute planned actions via the controller in the Minecraft environment.
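
The planning step can be sketched with a tiny forward-search planner over add-only actions. This is a stand-in for a real PDDL planner, not the planner used in this work, and the action names and dependency entries are hypothetical examples written by hand rather than domain content generated by the pipeline.

```python
from collections import deque
from typing import NamedTuple, Optional


class Action(NamedTuple):
    name: str
    preconditions: frozenset  # facts that must hold before the action
    effects: frozenset        # facts that become true afterwards


def plan(actions: list, initial: frozenset, goal: frozenset) -> Optional[list]:
    """Breadth-first search over sets of true facts; returns a shortest plan or None."""
    queue = deque([(initial, [])])
    seen = {initial}
    while queue:
        state, steps = queue.popleft()
        if goal <= state:
            return steps
        for action in actions:
            if action.preconditions <= state:
                next_state = state | action.effects
                if next_state not in seen:
                    seen.add(next_state)
                    queue.append((next_state, steps + [action.name]))
    return None  # goal unreachable with the known actions


def act(name: str, pre: tuple, eff: str) -> Action:
    """Small helper to keep the domain table below readable."""
    return Action(name, frozenset(pre), frozenset([eff]))


# Hypothetical dependency knowledge, as it might look after domain construction.
DOMAIN = [
    act("mine_log", (), "log"),
    act("craft_planks", ("log",), "planks"),
    act("craft_stick", ("planks",), "stick"),
    act("craft_crafting_table", ("planks",), "crafting_table"),
    act("craft_wooden_pickaxe", ("planks", "stick", "crafting_table"), "wooden_pickaxe"),
    act("mine_cobblestone", ("wooden_pickaxe",), "cobblestone"),
    act("craft_stone_pickaxe", ("cobblestone", "stick", "crafting_table"), "stone_pickaxe"),
]

stone_plan = plan(DOMAIN, frozenset(), frozenset(["stone_pickaxe"]))
print(stone_plan)
```

Each step in the returned list is a high-level action for the controller to execute. The search involves no LLM call, so the same domain can be replanned for every episode at no token cost, and the precondition check rules out steps such as mining cobblestone before a pickaxe exists.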

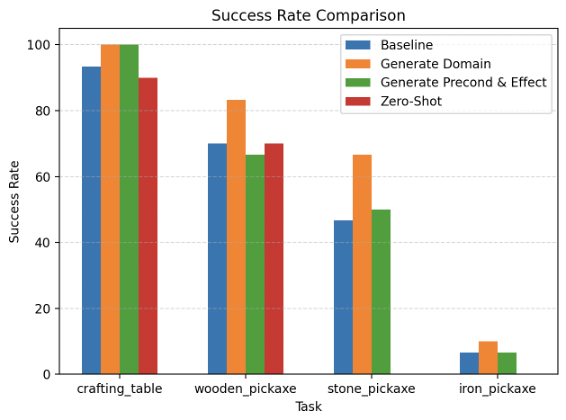

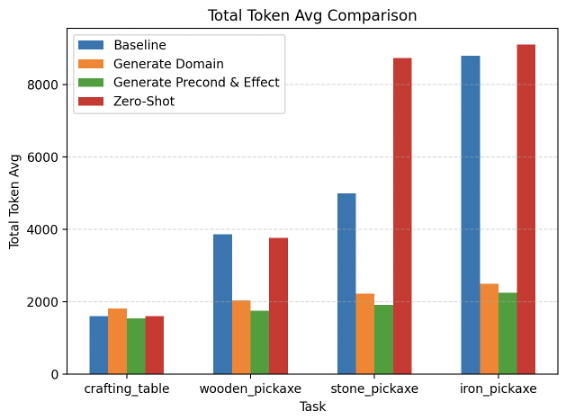

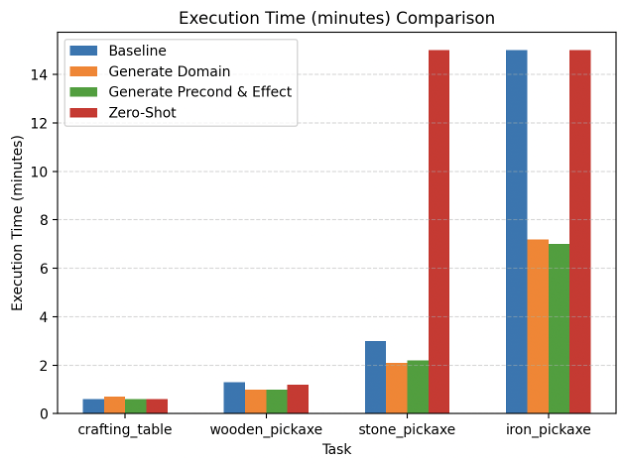

6. Experiments

6.1 Task settings

We evaluate on increasingly challenging tasks:

- Get crafting table

- Get wooden pickaxe

- Get stone pickaxe

- Get iron pickaxe (most challenging)

6.2 Method settings (baselines)

We compare against:

- Baseline: Jarvis without memory

- Generate Domain: LLM generates domain.pddl

- Generate Precond & Effect: LLM generates domain preconditions & effects

- Zero-shot: Jarvis without memory and without dependency knowledge

6.3 Metrics

We report:

- Success rate

- Token usage (with a breakdown into plan tokens and skill tokens)

- Execution time
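
The three metrics above could be aggregated from per-episode logs roughly as follows. The `Episode` record and its field names are assumptions for illustration, including the reading of "plan tokens" and "skill tokens" as two separately counted parts of the total. The sample values are placeholders, not experimental results.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Episode:
    """Hypothetical log record for one run of a task."""
    success: bool
    plan_tokens: int    # tokens assumed to be spent on planning queries
    skill_tokens: int   # tokens assumed to be spent on skill selection
    seconds: float      # wall-clock execution time


def summarize(episodes: list) -> dict:
    """Average the reported metrics over a list of episodes."""
    return {
        "success_rate": sum(e.success for e in episodes) / len(episodes),
        "mean_plan_tokens": mean(e.plan_tokens for e in episodes),
        "mean_skill_tokens": mean(e.skill_tokens for e in episodes),
        "mean_total_tokens": mean(e.plan_tokens + e.skill_tokens for e in episodes),
        "mean_seconds": mean(e.seconds for e in episodes),
    }


# Placeholder episodes, only to show the shape of the computation.
sample = [Episode(True, 100, 50, 10.0), Episode(False, 300, 150, 30.0)]
print(summarize(sample))
```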

7. Results & Discussion

From the presentation results:

- Higher success rate with KB-enhanced PDDL

- Lower token usage, especially by reducing repeated planning-time LLM queries

- Faster execution time

- More controllable and interpretable planning, reducing hallucination or erroneous actions

8. Demo: In-game execution

We include an in-game execution demonstration comparing:

- Jarvis-1 using LLM getskills

- Jarvis-1 using KB-enhanced PDDL

9. Advantages

Our approach:

- Uses fewer tokens while achieving higher success rate

- Reduces hallucination / erroneous actions

- Speeds up execution time

- Makes planning more controllable and interpretable

10. Limitations & Future Work

10.1 Quantity-insensitive planning

A plan can be procedurally correct but still fail due to insufficient item quantities. A possible solution is quantity-aware planning.
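
One way to picture quantity-aware planning is to expand a goal into raw-material counts before acting. The sketch below is an assumption about how this could be done, not part of the current method. The `RECIPES` table uses standard Minecraft crafting yields, and the function name is hypothetical.

```python
import math
from collections import Counter

# product -> (units produced per craft, {ingredient: units consumed per craft})
RECIPES = {
    "planks": (4, {"log": 1}),
    "stick": (4, {"planks": 2}),
    "crafting_table": (1, {"planks": 4}),
    "wooden_pickaxe": (1, {"planks": 3, "stick": 2}),
}


def raw_requirements(item: str, amount: int, recipes: dict = RECIPES) -> Counter:
    """Raw materials needed for `amount` units of `item`.

    Each ingredient is expanded independently, so leftovers are not shared
    between branches; the result is a safe upper bound, not always the minimum.
    """
    if item not in recipes:
        return Counter({item: amount})  # raw resource: must be gathered directly
    produced, ingredients = recipes[item]
    crafts = math.ceil(amount / produced)  # whole crafting operations required
    total = Counter()
    for ingredient, per_craft in ingredients.items():
        total += raw_requirements(ingredient, per_craft * crafts, recipes)
    return total


print(raw_requirements("wooden_pickaxe", 1))
print(raw_requirements("crafting_table", 1))
```

A quantity-insensitive plan would treat a single log as enough for a wooden pickaxe because every precondition becomes true once. Counting shows that the pickaxe needs two logs, and the crafting table a third.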

10.2 Text-only action decisions (no visual cues) + luck-driven mining

Current decisions rely on text-only signals; mining can be luck-driven, so success can depend on chance. A future direction is action decisions based on both text and visual information.

References

- Fan, Linxi, et al. MineDojo: Building open-ended embodied agents with internet-scale knowledge. NeurIPS 2022.

- Wang, Zihao, et al. Jarvis-1: Open-world multi-task agents with memory-augmented multimodal language models. TPAMI 2024.

- Guan, Lin, et al. Leveraging pre-trained large language models to construct and utilize world models for model-based task planning. NeurIPS 2023.

- Lifshitz, Shalev, et al. Steve-1: A generative model for text-to-behavior in Minecraft. NeurIPS 2023.